AI · Built & Operated By Southeastern Technical

Everyone Has Cloud AI. Almost Nobody Has Local AI.

Three AI conversations matter for your business. How do we use cloud AI (ChatGPT, Claude, Microsoft Copilot, Gemini, Grok) safely? Where is AI already exposing us to bias, data leakage, hallucinations, deepfake fraud, or oversight gaps? And which workflows belong on our own hardware, running on a private AI server we own?

We help with all three. The third (designing, building, and operating local AI for SMBs) is what almost no other MSP can do.

Our Differentiator

We Support Every Major AI — And We Also Build Your Own.

We help our clients get the most out of ChatGPT, Claude, Microsoft Copilot, Gemini, Grok, and the other cloud AIs they're already paying for — picking the right tier, locking down data flows, writing the policies, and integrating tools where they actually fit. That work is the table stakes of modern IT.

What sets us apart is what comes next: we also design, build, and operate private AI servers — sitting in your building, running on your data, doing the in-between work your team has been buried under for years. The same architecture we run our own service desk on. Almost no other MSP can have that conversation with you.

See how Local AI works →

Your data, your network

Zero exfiltration to a third-party model.

Unmetered throughput

Run the queue 24/7 with no per-token meter.

Eliminates the in-between work

The work no one had time to do — handled around the clock.

Custom-built & operated

Designed around your workflows. We run it.

The 10 AI Risk Areas Across 5 Categories

Sourced directly from our internal AI Risk Red-Yellow-Green Report. Click any section for a full breakdown — what the risk looks like in practice, and how we help you contain it.

Discrimination & Bias

AI systems can perpetuate or amplify biases present in their training data. Businesses can still carry legal liability under existing civil rights laws when the discrimination comes from an AI tool.

- Unfair Discrimination & Bias

Read more →

Privacy & Security

Free-tier AI tools may retain and train on whatever you paste in. AI systems also introduce new attack vectors — prompt injection, shadow AI, and AI-generated phishing.

- Data Privacy & AI Leakage
- AI Security Vulnerabilities

Read more →

Misinformation

AI models hallucinate — they generate plausible-sounding but fabricated information with full confidence. Acting on unverified AI output creates real liability.

- AI Hallucination & False Information

Read more →

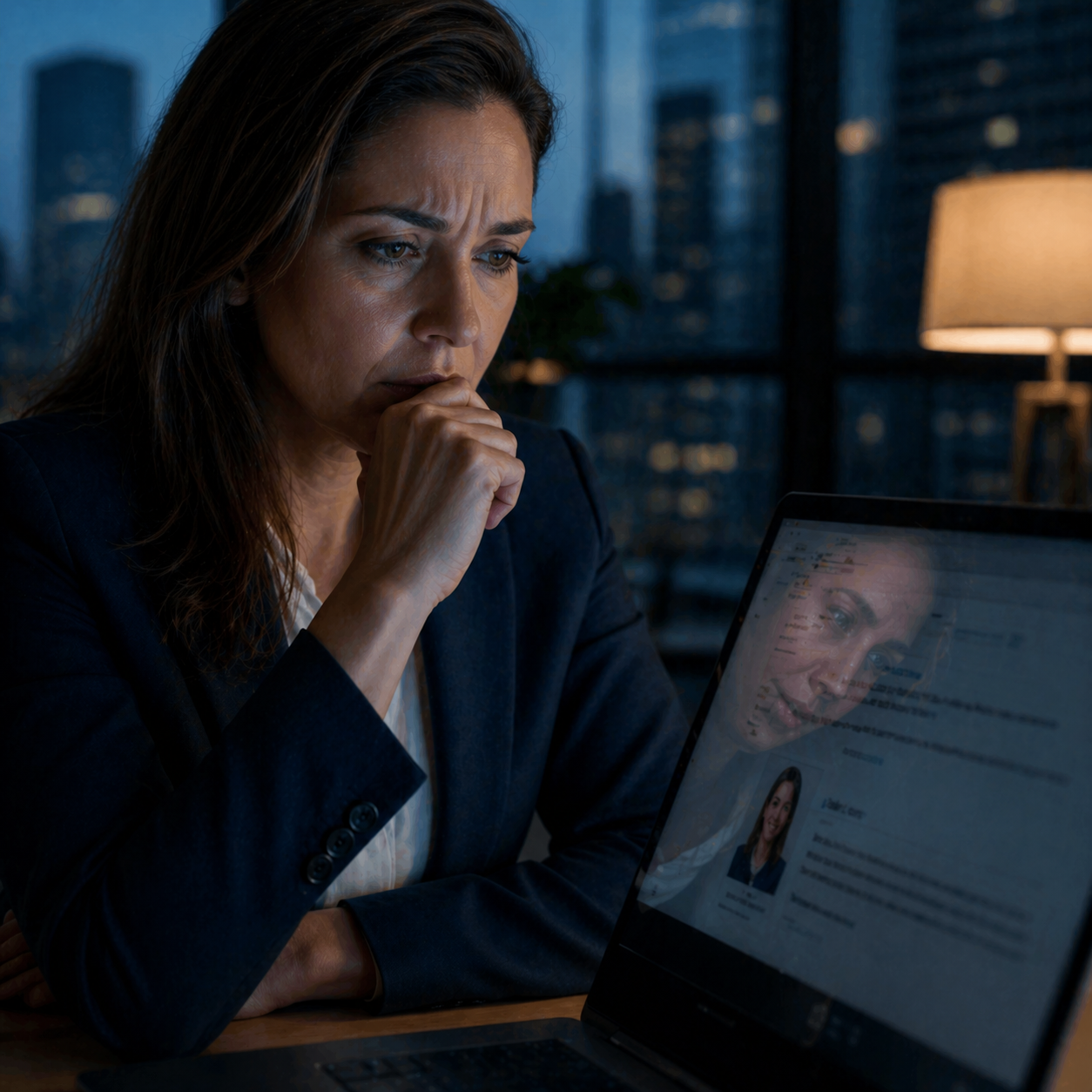

Malicious Use & Threats

AI has changed the cyber threat landscape. Keeping up with voice cloning, deepfake video, AI-written phishing, and polymorphic malware takes AI-powered defense.

- AI-Enhanced Cyber Threats
- AI-Powered Fraud & Impersonation

Read more →

Human Oversight & Governance

AI should augment human decision-making, not replace it. Without clear boundaries, businesses face overreliance, regulatory exposure, and accountability gaps.

- Overreliance on AI
- Human Decision Authority
- AI Reliability & Robustness
- AI Transparency & Explainability

Read more →

Risk you can't see

Shadow AI tools, client data pasted into free-tier ChatGPT, voice-cloned vendors. Most exposure happens before policy ever catches up.

Productivity at stake

Done well, AI compounds your team's output. Done wrong, it erodes institutional knowledge and creates outage risk you can't recover from.

A clear path forward

We give you a Red-Yellow-Green grade across all 10 areas — with prioritized next steps and the policies, training, and tooling to get there.

Find Out Where Your Business Stands

Our free IT, AI & Cyber Assessment includes a full Red-Yellow-Green review of your AI risk surface — bias, data leakage, hallucinations, oversight, threat exposure — plus a Local-AI fit assessment for the workflows worth bringing in-house.

Schedule Your Free Assessment

Or call us directly: (678) 807-6156